
10/7/2023 MAML meta-learning

The two training loops of MAML try to achieve good performance using different objectives. In the inner loop, MAML guides the model toward the lowest training loss for a particular task. In the outer loop, the objective function is used to find parameters that generalize to similar sets of tasks. The main flaw of MAML is that its performance and stability vary greatly when the number of parameters in the base network is substantially increased. This problem is tackled by the approach known as MAML++. MAML++ accounts for the instability of MAML by calculating the loss on the target set after every inner-loop step. These losses are then weighted and accumulated, and the result is used to update the meta weights of the base network. However, MAML++ still has certain drawbacks, such as large memory consumption, longer training time and poor optimization for networks with many parameters. In this paper, a novel meta-learning method called one-step MAML is developed. One-step MAML is a variation of MAML where training is conducted using inner and outer loops. It is trained with the aim of achieving good accuracy when only a limited amount of data is available. It also attempts to tackle the vanishing and exploding gradient problem that is typically seen during MAML training.
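The weighted accumulation of target-set losses described for MAML++ can be sketched in a few lines. This is only an illustration under stated assumptions: the scalar model `y = w * x`, the helper names `mse`, `mse_grad` and `multi_step_loss`, and the example step weights are all hypothetical and do not come from the MAML++ implementation. The sketch computes the accumulated loss only. In an automatic differentiation framework, the gradient of this total with respect to the initial `w` would drive the meta update.

```python
def mse(w, data):
    """Mean squared error of the toy scalar model y = w * x."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


def mse_grad(w, data):
    """Derivative of mse with respect to w."""
    return sum(2 * x * (w * x - y) for x, y in data) / len(data)


def multi_step_loss(w, support, target, alpha, step_weights):
    """Weighted sum of target-set losses, one loss per inner-loop step.

    step_weights holds one (assumed, user-chosen) weight per inner step.
    Returns the accumulated loss and the list of per-step target losses.
    """
    total = 0.0
    per_step = []
    for weight in step_weights:
        w = w - alpha * mse_grad(w, support)  # one inner-loop update on the support set
        step_loss = mse(w, target)            # target-set loss right after this step
        per_step.append(step_loss)
        total += weight * step_loss
    return total, per_step


# Toy task y = 2x, three inner steps, later steps weighted more heavily.
support = [(1.0, 2.0), (2.0, 4.0)]
target = [(3.0, 6.0)]
total, per_step = multi_step_loss(0.0, support, target, alpha=0.1,
                                  step_weights=[0.2, 0.3, 0.5])
print(total, per_step)
```

Setting every weight except the last to zero reduces this to the usual MAML objective, which only looks at the loss after the final inner step.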

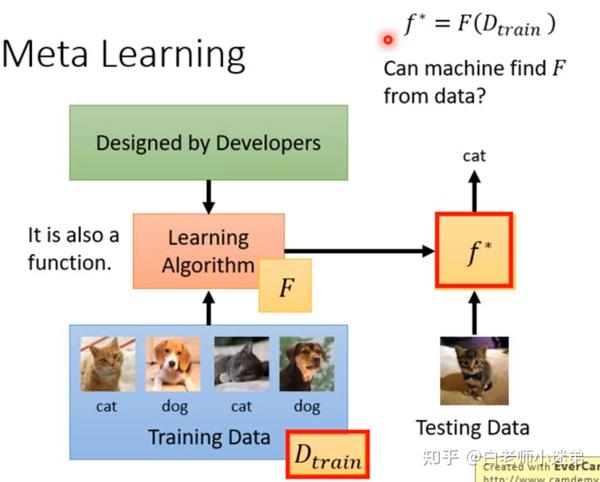

Meta-learning is an alternative approach that trains a network with fewer examples while still achieving accurate task performance. It uses metadata and a two-loop mechanism to guide training efficiently, so that patterns are learned from as few training samples as possible. As a result, meta-learning is also known as a “learning to learn” mechanism that enables a new model to rapidly learn new tasks or adapt to new environments from a few training examples. Great strides have been made in few-shot learning through emerging models and training methods such as Siamese networks, matching networks and memory-augmented networks. One of the variants of meta-learning is known as Model-Agnostic Meta-Learning (MAML). MAML was created with the goal of teaching the base network to be more versatile and adaptive to more than one task. This method can be used in classification, regression and reinforcement learning. MAML conducts the training procedure using two loops, known as the inner loop and the outer loop.
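To make the two-loop structure concrete, here is a minimal sketch on a deliberately tiny problem. Everything in it is an assumption made for illustration: the scalar model `y = w * x`, the task family (lines with a random slope), the function names and the hyperparameters. Because the loss is quadratic in `w`, the derivative through the inner loop can be written by hand, so no deep learning library is needed.

```python
import random


def loss(w, data):
    """Mean squared error of the toy scalar model y = w * x."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


def grad(w, data):
    """First derivative of the loss with respect to w."""
    return sum(2 * x * (w * x - y) for x, y in data) / len(data)


def hessian(data):
    """Second derivative of the loss; constant in w for this model."""
    return sum(2 * x * x for x, _ in data) / len(data)


def adapt(w, support, alpha, steps):
    """Inner loop: gradient descent on a single task's support set."""
    for _ in range(steps):
        w = w - alpha * grad(w, support)
    return w


def meta_gradient(w, support, query, alpha, steps):
    """Derivative of the post-adaptation query loss w.r.t. the initial w."""
    w_adapted = adapt(w, support, alpha, steps)
    # Chain rule through the inner loop: each step scales dw by (1 - alpha * H).
    dw_adapted_dw = (1 - alpha * hessian(support)) ** steps
    return grad(w_adapted, query) * dw_adapted_dw


def sample_task(rng, n=5):
    """Hypothetical task family: y = slope * x with a per-task slope."""
    slope = rng.uniform(1.0, 3.0)
    xs = [rng.uniform(-1.0, 1.0) for _ in range(2 * n)]
    points = [(x, slope * x) for x in xs]
    return points[:n], points[n:]  # (support set, query set)


def maml_train(meta_steps=200, tasks_per_step=4, alpha=0.1, beta=0.05,
               inner_steps=1, seed=0):
    """Outer loop: move the shared initialization along the averaged meta-gradient."""
    rng = random.Random(seed)
    w = 0.0
    for _ in range(meta_steps):
        tasks = [sample_task(rng) for _ in range(tasks_per_step)]
        g = sum(meta_gradient(w, s, q, alpha, inner_steps) for s, q in tasks)
        w = w - beta * g / len(tasks)
    return w


w_init = maml_train()
print(w_init)
```

The returned `w_init` is not the solution to any single task. It is a starting point from which a few inner-loop steps on a new task's support set should reach a low loss quickly, which is the behaviour the outer loop optimizes for.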

**MAML**, or **Model-Agnostic Meta-Learning**, is a model and task-agnostic algorithm for meta-learning that trains a model’s parameters such that a small number of gradient updates will lead to fast learning on a new task.

Adapting to the scarcity of samples during training is an essential trait for improving the learning capability of a deep learning model on specific tasks. Conventional training mechanisms often achieve limited classification performance because they need large numbers of training samples. To counter this issue, the field of meta-learning has shown great potential in fine-tuning and generalizing to new tasks using small datasets. As a variant derived from the concept of MAML, a one-step MAML combined with a two-phase switching optimization strategy is proposed in this paper to improve performance using fewer iterations. One-step MAML uses two loops to conduct the training, known as the inner and the outer loop. During the inner loop, the gradient update is performed only once per task. In the outer loop, the gradient update is based on the losses accumulated on the evaluation set during each inner loop. Several experiments using the BERT-Tiny model are conducted to analyze and compare the performance of one-step MAML on five benchmark datasets. The evaluation shows that the best loss and accuracy are achieved when one-step MAML is coupled with the two-phase switching optimizer. It is also observed that this combination reaches its peak accuracy in the fewest steps.
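The one-step procedure can be written compactly as two updates. The notation here is an assumption borrowed from the usual MAML formulation, not taken from the paper: $\theta$ is the shared initialization, $\alpha$ and $\beta$ are the inner and outer learning rates, and $\mathcal{L}^{\text{train}}_{\mathcal{T}_i}$ and $\mathcal{L}^{\text{eval}}_{\mathcal{T}_i}$ are the losses on the training and evaluation sets of task $\mathcal{T}_i$. The plain gradient step in the outer update is a stand-in, since the two-phase switching optimizer is not specified here.

$$
\theta_i' = \theta - \alpha \, \nabla_\theta \mathcal{L}^{\text{train}}_{\mathcal{T}_i}(\theta)
$$

$$
\theta \leftarrow \theta - \beta \, \nabla_\theta \sum_i \mathcal{L}^{\text{eval}}_{\mathcal{T}_i}(\theta_i')
$$

The first line is the single inner-loop update per task. The second accumulates the evaluation losses of all adapted parameters $\theta_i'$ and uses their gradient to update the initialization.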
